This week started and ended in very different places. On Monday I was feeling like I was hit by a truck as my body responded to the COVID and flu vaccines I got the day before. By Friday, I was riding high as my students blew past a goal I set for them this week.

Day 13

Cursive

To ensure students are getting the help they need, I pulled a small group this morning for additional guidance and practice with their cursive handwriting.

After complaining last week that cursive is hard to teach and support as a whole class, this week I’m breaking students into small groups. Some will practice previously learned letters while others move on to learning new letters. We’ll see how it goes!

Amplify CKLA

In today’s Amplify CKLA lesson, we revisited finding text evidence to support character traits.

Then we learned about the cause & effect text structure. We thought about examples from the first story we read, “The Good Lie,” and then we worked in pairs to find examples in our current story (the opening of Condoleezza Rice’s memoir).

I’m continuing to try out using timers to keep me on track with my Amplify CKLA lessons. I did pretty well with the first activity we did. I think we ran just a couple of minutes over.

After identifying examples of the cause & effect text structure in our reading, we attempted to write a short personal narrative using the same text structure. First, the students brainstormed stories about a time someone changed them. Then they planned & started writing.

I was not as successful keeping to my timer on this second activity in today’s Amplify CKLA lesson. I created my own models of all the steps of the writing process to help scaffold students. It meant they didn’t get to finish writing their paragraphs. That’ll have to be tomorrow!

Math

Teaching math sucked today. The biggest problem is that I felt like death warmed over because I got my flu shot and COVID booster yesterday. Another big reason has to do with the amount of content packed into this one math lesson. I will definitely be planning this differently for next year!

So, Lesson 1 in iReady Mathematics Classroom is called “Understand Place Value” and it even has two reasonable sounding learning targets. However, here’s everything my students had to learn:

1. All the place values in the thousands period.

2. Writing 4-, 5-, and 6-digit numbers in standard form.

3. Writing multi-digit numbers in word form.

4. Identifying the value of a given digit.

5. Writing multi-digit numbers in expanded form.

6. Representing multi-digit numbers in different ways. For example, 25,049 is also 250 hundreds + 4 tens + 9 ones, or 2,504 tens + 9 ones, or 25,049 ones.

7. The x10 relationship between place values.

8. Using the language “10 times as many” to describe relationships between particular digits.

Whew! And that’s all in ONE lesson. Keep in mind these students have only worked with numbers up to 999 before now. It’s supposed to take 3 days, but the topics are all just thrown in scattershot every day. There’s no chunking or dedicated time for practicing just one of those things at a time.

I don’t feel like my class finished the third day of this lesson comfortable or confident in any one of the many skills in this lesson. This was not an auspicious start to a new year of math learning. (Feeling like I have the flu didn’t help!)
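To make a few of the skills in that list concrete, here is a minimal Python sketch. It is only an illustration, not part of the iReady materials, and the names `PLACES`, `digit_values`, `expanded_form`, and `regroup` are made up for this example. It assumes whole numbers with at most six digits, which matches the thousands period this lesson covers.

```python
# Illustrative sketch only: these helper names are hypothetical,
# and the code assumes whole numbers from 0 to 999,999.
PLACES = ["ones", "tens", "hundreds",
          "thousands", "ten thousands", "hundred thousands"]


def digit_values(n):
    """Return (digit, place name, value) for each digit, largest place first."""
    digits = str(n)
    result = []
    for i, ch in enumerate(digits):
        power = len(digits) - 1 - i  # 0 for ones, 1 for tens, and so on
        result.append((int(ch), PLACES[power], int(ch) * 10 ** power))
    return result


def expanded_form(n):
    """Write n as a sum of the values of its nonzero digits."""
    return " + ".join(f"{value:,}" for _, _, value in digit_values(n) if value)


def regroup(n, power):
    """Express n as a count of 10**power units plus the leftover smaller places."""
    count, rest = divmod(n, 10 ** power)
    parts = [f"{count:,} {PLACES[power]}"]
    if rest:
        for digit, place, _ in digit_values(rest):
            if digit:  # skip placeholder zeroes
                parts.append(f"{digit} {place}")
    return " + ".join(parts)


print(expanded_form(25049))  # 20,000 + 5,000 + 40 + 9
print(regroup(25049, 2))     # 250 hundreds + 4 tens + 9 ones
print(regroup(25049, 1))     # 2,504 tens + 9 ones
print(regroup(25049, 0))     # 25,049 ones
```

Even in code, expanded form and "representing numbers in different ways" turn out to be separate ideas that need separate functions, which is part of why cramming them into one lesson feels like so much.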

As an aside, someone on Mastodon asked me how I would plan this lesson differently in the future. Great question! When I do this again next year, here’s the flow I imagine I will follow:

- Day 1: Introduce BIG numbers with some sort of provocation, including reading and writing them in standard form and word form. (Technically, you don’t need to dive into individual place value to read and write these numbers, so I’d hold off on that.)

- Day 2: Extend the patterns of 10 ones –> 1 ten and 10 tens –> 1 hundred into the thousands period showing that the pattern repeats over and over. Then we’d practice writing numbers in a place value chart, naming the places that individual digits are in, and identifying the values of those digits based on their place. The culmination of this would be writing these numbers in expanded form.

- Day 3: I’d love to have a day to practice and consolidate all of this. Unfortunately, if I wanted to cover everything in the existing lesson, I’d have to spend time on day 3 on the “10 times as many” relationship. (Personally, I would prefer to save that for when we actually study multiplicative comparisons in a later lesson. It feels shoehorned in here. The language of “_ times as many” needs some work for students to make sense of it.)

The one topic I probably won’t include next year is representing numbers in different ways, such as 25,049 as 250 hundreds + 4 tens + 9 ones. That feels like a nice to know, but not a need to know, particularly since there is no easy way to build a model to show students what’s happening. It’s just too big.

Day 14

Amplify CKLA

This morning, students finished writing their personal narrative paragraph using the cause and effect text structure. Then I had them underline the sentence(s) telling the cause in red and the sentence(s) telling the effect in blue.

Afterward, they read their story to a partner up to the point of sharing the cause. (The color coding they did helped them identify where they needed to stop reading.) Their partner then had to predict the effect.

One thing I appreciate in this first unit of Amplify CKLA is that my students are getting an opportunity to write a new paragraph every single lesson. At least I think that’s a good thing. It all feels like it’s moving really fast, but it is definitely helping build up their stamina for all the writing to come throughout the year. Rather than focus on quality, I’m really going hard on praising them for having pen to paper during the time I’m giving them to write. My hope is that quality will come over time with the many writing opportunities they’ll have across the school year.

After wrapping up our lesson on cause and effect, we moved into a new lesson that’s going to focus on sensory details. In the first activity, students practice summarizing a text. First, I asked for a volunteer to act out any actions they heard as I read a few paragraphs from “How to Eat a Guava.”

The rest of the class then identified the actions and recorded them.

Since our goal is a summary, we took all the actions and put them in order on a timeline.

I didn’t want to shortchange the next part, so we’ll do that tomorrow: Students will choose four actions we identified today and illustrate them to make a comic strip summary of the text we read. I look forward to seeing the finished results! I’m definitely happy for the opportunity to draw to add some variety to all the paragraph writing we’ve been doing.

Science

Today we finished a lesson we started last week where we continued investigating the question, “What is a system?” After observing a cherry pitter, making a simple electrical system, reading a book about different systems, and practicing identifying parts of a system, we were finally ready to tackle the guiding question. Here was my favorite response from a student: “A system is multiple parts working as one.”

I hated that I had to leave this lesson hanging for a few days before we got to do the wrap up today, but I’m glad I didn’t just skip it and move on. It was really important as the culmination of everything we did to develop an answer to our investigation question.

Now that we have a clearer understanding of what a system is, we’re beginning to explore electrical systems and specifically what electrical energy is used for. We’re going to use a digital simulation to help us explore our new investigation question.

I almost ran out of time before lunch though! The students were going to revolt if they didn’t get to try out the simulation I had just told them about. Good thing they were able to grab their computers quickly so they could explore for 5 minutes! Tomorrow we’ll spend more time exploring the simulation and talking about what they can do in it.

Math

Thankfully I’m feeling MUCH better today after my flu and COVID vaccines, and the quality of today’s math lesson was also markedly improved.

Instead of moving forward with the next lesson where we start comparing big numbers, I decided to give my students time to practice and consolidate some understandings today.

For example, when we talked about writing/saying a number in word form, I specifically called out the number of times you say the word “thousand.” (Once.)

Then I walked over to the place value chart and specifically called out the names of all the places and how the word “thousand” is written multiple times. (Thousands period, hundred thousands, ten thousands, thousands) I said I had a suspicion a lot of them were adding “thousand” after every other word because of what they were seeing on the place value chart. They agreed.

I went back over to the white board where I’d written “_____ thousand, _____” and said, “When we write or say a number in word form, we say the word ‘thousand’ one time. How many times do we say ‘thousand’?” And they replied, “One time.” I asked the question and they chorally repeated the answer a couple more times, and then we wrote a number in word form.

I got some peace of mind when I remembered that my class last year also struggled with all these place value skills they had to learn all at once. My strategy last year was to move on but to continue providing short practice opportunities, and they eventually got it.

Feeling like I got hit by a truck from my flu and COVID shots just really got me worked up yesterday. I’m glad I feel better today and have a clearer head about it.

Day 15

Amplify CKLA

Using the timeline of events we created yesterday from “How to Eat a Guava”, students chose four events and made a comic strip summarizing the story.

I loved these so much! The little attentions to detail were so delightful to see. There were thought bubbles, a tear, dashed lines showing what she was looking at, and a frown.

To top it off, I feel like I’m finally finding a good pace with Amplify CKLA. I’m getting about half a lesson done each day. I’ll take completing a lesson in 2 days instead of the 4 it was taking. At this rate, I’ll get through twice as much curriculum as I was going to get through!

Our next Amplify CKLA lesson is all about sensory details. For the first activity, students worked in pairs to read the first four paragraphs of “How to Eat a Guava” and gathered examples of sensory details for sight, touch, and taste.

I feel like the students are adjusting to Amplify CKLA. It demands a lot from them, and they’re rising to the occasion! (Don’t get me wrong. The work is still hard for a lot of them. Many of them have a lot of room for growth with regard to their writing, but in terms of participating and making an effort, they are rocking it!)

Science

We dove back into the digital simulation in science today to try and figure out which of the available devices has electrical energy as an input.

This was a challenging task. Halfway through, I could tell many of them were struggling because they had no idea what they were looking for. I loaded up the sim on my computer, and called them back together so we could study one example together. That helped focus them, and focus my feedback, for the rest of the time they explored.

Math

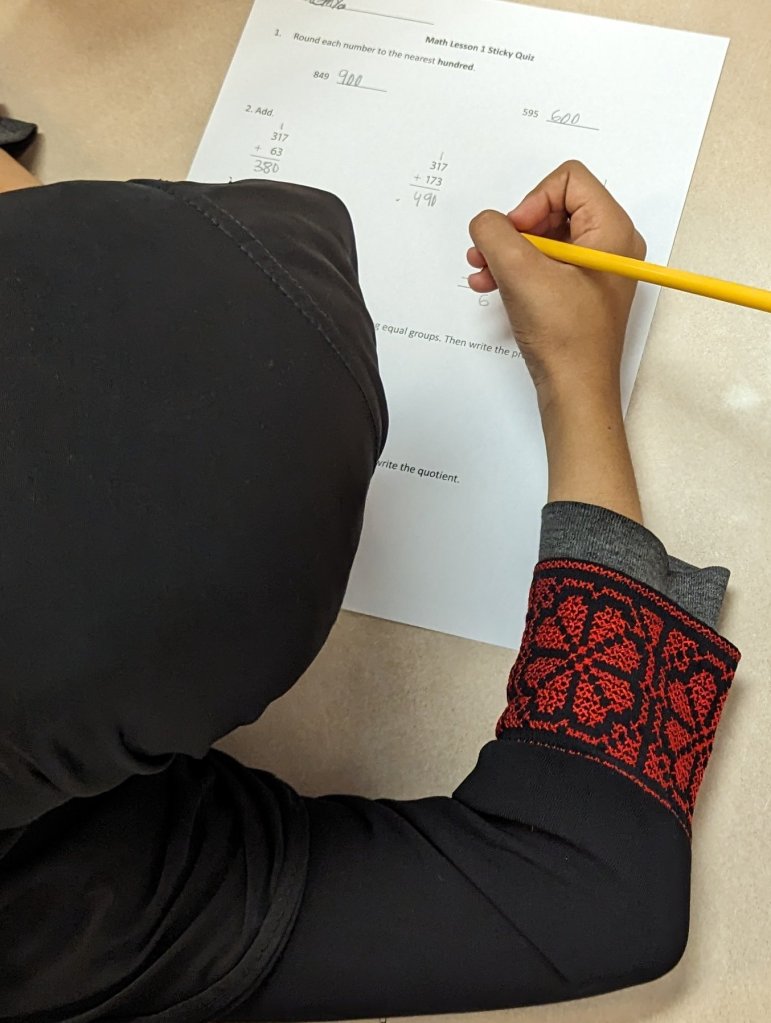

The past few nights, my students have been engaging in “sticky” practice at home to help make their math knowledge “stickier” in long-term memory. Today they took a “sticky” quiz in class to check their understanding of the skills they’ve been practicing.

We had just graded last night’s homework, so I left it on the board as a set of worked examples in case anyone needed help. Several students said, “I don’t know how to draw a picture for division.” I was able to say, “Here’s a great example that can help you.”

As we talked about today’s learning target in math, we revisited the meaning of “multi” and gave examples of multi-digit numbers.

It’s all these extra little things that I add that probably lead to me running short on time all the time, but they feel worth it.

My students were on fire when we moved into our warm up, the Same and Different routine.

I need to remember I want to color code similarities in one color and differences in another when I do this routine to help differentiate them for my students.

After making sense of this problem together, I asked students what they thought the answer was. Then they did a turn & talk with their partner before sharing out. They already know so much about how to use place value to compare numbers!

I like that the first session in any given lesson in iReady Mathematics Classroom asks students to do just a few problems on the new topic. I was able to put some review questions from the place value lesson we just finished on the back of their practice page.

Day 16

Amplify CKLA

In today’s Amplify CKLA lesson, we started preparing to write a paragraph using sensory details. We warmed up by working together to generate sensory details about our classroom.

Then we launched into the first step of the writer’s process: brainstorming! Students were asked to brainstorm memories about food.

I find that these lessons assume my students are ready and raring to go with writing, and that’s… not the case. I don’t want to slow the lessons down too much, but I do want to scaffold more. So I’ve been doing the same writing tasks as them to create models of each step of the process so they can see what finished work might look like before they get started. I feel like it’s lowered the anxiety a bit.

After brainstorming food memories, each student chose one food and wrote sensory details about it. They got excited when I told them we were going to play a game with their sensory details. When they were done, I used Milling to Music to get them up and moving. When the music stopped, they had to find a partner and read their sensory details. Their partner had to guess what food they were describing. They had so much fun!

When we were done, we debriefed:

Me: “What food did you guess?”

S: “I guessed it was broccoli.”

Me: “What sensory detail made you think he was writing about broccoli?”

S: “He said it looked like a tree.”

Me: “I love that!”

Math

As we checked our homework today, we used place value cards to verify the value of each digit as we rewrote a number from standard form into expanded form. Great opportunity to reinforce placeholder zeroes!

I got these cards to use this school year, but I’ve been so busy I failed to make use of them during the place value lesson where they would have been most beneficial! Thankfully place value is already showing up in our spiral review homework so they’re coming in handy now!

For today’s warm up, I asked, “Which one doesn’t belong?”

“D, because all the other numbers are 1,038, but that one isn’t. It’s 1,308.”

“I think D doesn’t belong either because the 3 is in the hundred place, but in the other numbers it’s in the tens place.”

We’re only on Day 16, and they’re getting so good at sharing their thinking with more and more sophistication. Can’t wait to see where they go from here!

Part of making sense of the math problem in today’s math lesson was wondering, “What questions could we ask about this situation?” I loved hearing all the different responses!

I was about to move on after a student shared, “Which one is less?” when, thankfully, a student tentatively raised his hand and said, “Couldn’t they also ask how many there are altogether?” Yes!

It’s important to know that we can often ask *multiple* questions about the *same* situation.

Today’s math problem was a great teaching opportunity about paying attention to what the question is asking. It could have asked for greater or fewer. It could have asked for a number, a comparison statement, or just words. We’re learning to pay close attention!

I didn’t emphasize this nearly enough last year.

I want my students to get immediate feedback when they’re practicing new skills. Today they checked their own work using an answer key after they finished page 1. That way they could identify any gaps in understanding before tackling page 2.

I sat at the back table with the answer keys to help answer questions as they tried grading their own work. It was a great opportunity to see how they were doing and to give personalized feedback in the moment.

Day 17

A Milestone!

Today we celebrated a HUGE milestone. Every day students earn points for following expectations and answering questions. This week I challenged them to reach 1,000 points by Friday, and they blew past our goal. I was so proud to celebrate their accomplishment!

I’m proud of myself, too! This is a new behavior management system for me. I worried I wouldn’t be able to remember to give out points while trying to teach at the same time. It’s taking practice, but I love that I have a quantitative measure showing just how much I’ve been praising my students. While I tend to give out between 70 and 100 points a day, I’ve only had to give out up to 5 strikes in a day.

Cursive

We’re nearing the end of learning the lowercase cursive letters. Today we learned the little loop group. I’m so proud of the time my students have put into practicing in class and at home. I can tell it’s paying off!

Once I get past the lowercase letters, I feel like the hard part is done. The bulk of what we write is lowercase letters. If all goes well, I’ll have taught them all by the end of next week!

Amplify CKLA

After reading just a portion of the text previously, today we read and discussed the entire essay “How to Eat a Guava.” Afterward, students worked in pairs to answer questions about the text.

This lesson was interesting because we’d read a paragraph, and then there were a few comprehension questions to ask. Rinse and repeat through most of the story. It wasn’t until after we moved on that I realized we never really talked about *why* she might have written this story. It’s a beautiful story, and I want my students to understand the “why” behind the story a little more.

Team Building Day

Today was Team Building Day at Iroquois! This is an annual tradition that happens early every school year. The purpose is to launch our CARE (Cooperation-Appreciation-Respect-Excellence) program in school and to have a lot of fun.

Yesterday I gave everyone a large index card. They had to write their name in the middle and then surround it with six names of students they’d like to be on a team with. I promised they would be on a team with at least *one* of the names on their card.

To find out their teams this morning, we did an activity called Barnyard Babble. Once students found out who was on their team, they had to make up a team name and team handshake.

Our first team building activity today was called Two Hands on a Pencil. Students worked in partners to draw a picture. The catch is that they couldn’t talk, and they *both* had to be holding the pencil while drawing.

This is one of my favorite activities! I love debriefing afterward. I love to hear their ideas about how they decided what to draw. Usually one partner takes on more of a leader role and the other willingly follows. Great opportunity to talk about compromise.

Our second team building activity today was the paper chain challenge. I was so impressed by how close in length all of the finished paper chains were. Last year I had one team whose chain ended up being maybe a foot long? It was great to see that every team had so much success this year. They all did a great job!

Our final team building activity this afternoon was hand ping pong. Students worked in pairs to try to hit a ping pong ball on their desks as many times in a row as possible before it fell off or stopped moving. Such a fun Friday afternoon!

Silly and good fun.